I proposed a paper for this year’s Rocky Mountain Conference on Latin American Studies (RMCLAS) on the Google Books corpora and ngram viewer for Latin American historians. The conference is rapidly approaching (it is only a few days away), so I’m writing this post to put a few of my thoughts together.

The publication in Science¹ of a paper based on computational analysis of the Google Books corpus, together with a publicly accessible n-gram viewer, caused quite a stir in the worlds of history and digital humanities. A Google blog search for the terms “google n-gram” returns more than 342,000 results (accessed 4/1/2011). Amongst the more academic of those results, there has been a justifiable mixture of excitement and wariness over the prospects for a humanist mining of the corpus. (See, for example, Dan Cohen and Mike O’Malley for historians who are cautiously optimistic and crankily skeptical, respectively. The response in other humanities-related fields has in some cases been much more caustic, particularly from those who see understanding culture as a disciplinary prerogative.) Some of the negative reactions are rooted in Michel et al. coining a neologism to describe their work, culturomics, meant to draw comparisons to the methods of genomics. It’s a silly word, as made-up academic words tend to be. Nonetheless, the positions both for and against culturomics have usually centered on the English-language corpus. (For an exception, see here.) That the English corpus is by far the largest is not surprising given the genesis of the corpora, which I will speak about in a moment. It constitutes some 500 billion (with a ‘b’) individual words generated from approximately 4% of the English-language books ever published. This represents a fraction of the total books that Google has scanned or had delivered from publishers, which total something in the neighborhood of 11% of books ever published, in 478 languages. The books in the full corpus date back to 1473, and total some 5 billion pages and 2 trillion words. It is impressive in scale, to say the least, though not all of those books are included yet in the n-gram corpus.²

But we’re at a conference on Latin American studies, so let’s turn to una de las otras corporas disponibles. In fact, what I want to talk about today is what the Google Books corpus and the n-gram viewer have to offer to Latin American historians. I’ll give a brief overview of how the corpus was constructed, why it’s cut up into n-grams, and what some of the uses of both Google Books and the n-gram viewer are for historical research on Latin American topics, as well as their limitations.

So, where did all this digitized text come from, and why was it carved up into groups of 1-, 2-, 3-, 4-, and 5-word phrases? The Google Books project has lived under a cloud of uncertainty with regard to the copyrighted material, including orphaned works, that has been scanned in the project. That is, most books published after 1923 or so in the United States exist under some level of copyright protection, even when the holders of those rights can no longer be found.

Works prior to that (which are really the ones that interest me) are held to be in the public domain, and one can download either a pdf or, frequently, an epub file of the work. Copyright posed a problem for the ambitions of the culturomics people, because protected works could not simply be analyzed as whole texts. As a solution, the corpora for the culturomics project were split into n-grams: datasets of every 1-, 2-, 3-, 4-, and 5-word phrase in the corpora. The datasets are huge, and are available for download directly from Google. If you have a bunch of drive space and spare computing cycles lying around, you can download the full sets and run your own routines over them. I reckon that is a task currently beyond most in this room, though! At any rate, by splitting the texts into n-grams, the culturomics people could track the occurrence of phrases across time, drawn from the entirety of the collection, without regard to the copyright restrictions of the actual works involved. They’ve done this, and claim as a result to be able to track a whole range of cultural and cultural-historical phenomena with “quantitative rigor”. For historians, much of this is problematic on the face of it, as contextual use of language is much, much more important than, say, orthographic change or raw frequencies.
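To make the idea of carving a text into n-grams concrete, here is a minimal sketch in Python. It is only an illustration under simple assumptions (splitting on whitespace, no handling of punctuation or case), not the procedure the culturomics team actually used, and the function names `ngrams` and `count_ngrams` are my own.

```python
from collections import Counter


def ngrams(tokens, n):
    """Return every run of n consecutive tokens as a tuple."""
    # Zipping the token list against shifted copies of itself yields
    # each window of n adjacent tokens.
    return list(zip(*(tokens[i:] for i in range(n))))


def count_ngrams(text, max_n=5):
    """Count every 1- to max_n-word phrase in a text.

    Assumption: tokens are whatever str.split() produces; a real
    pipeline would need proper tokenization.
    """
    tokens = text.split()
    counts = Counter()
    for n in range(1, max_n + 1):
        counts.update(ngrams(tokens, n))
    return counts


sample = "bajo la ley y bajo la constitución"
counts = count_ngrams(sample)
print(counts[("bajo", "la")])
print(counts[("bajo", "la", "ley")])
```

Once a text is reduced to counts like these, the original running prose cannot be read from them, which is the point of the workaround described above.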

There are also implications for the discoverable trends inherent in the selection biases of the corpora. In the case of old Spanish material, I don’t find this so troubling. The Spanish corpus largely came from scanning the libraries at the University of Michigan, Harvard University, the New York Public Library, Stanford University, Princeton University, and the Complutense University of Madrid. For academic researchers, the upside of this is that the collections are decidedly academic in their orientation. For public domain books, the corpus is very rich in law codes, legal commentaries, scientific and medical tracts, histories and chronicles, and, to a much lesser extent, golden age drama. For the nineteenth century, it is much the same, with a heavy emphasis on work oriented in some fashion or another to the law and history. While this does put limitations on the type of trackable information in the public domain Spanish corpus, it still holds out loads of interesting potential searchability. (By the way, in its recent redesign, Google made the advanced book search page much more difficult to find. It’s still there, though.) All that said, the returns I get from using the Google Spanish corpus and Mark Davies’s Corpus del Español are frequently quite different, and Google is frequently more useful.

N-gram plots of the Spanish corpus can be done with the Books Ngram Viewer. One can enter a series of words or phrases, each up to five words long, choose a date range (defaulted to 1800-2000), and select the language corpus. The Ngram Viewer will then plot the frequencies of those phrases across time, and provide links to see the term occurrences in the scanned books. From there, you can usually also download a copy of that book if it’s in the public domain.
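As I understand it, what the Viewer plots is not a raw count but a share: how often a phrase appears in a given year relative to everything printed that year in the corpus. Here is a rough sketch of that calculation, assuming a simple division of the phrase count by the yearly total. The function name `relative_frequency` is mine, and the numbers are invented toy values, not real corpus counts.

```python
def relative_frequency(phrase_counts, total_counts):
    """Share of each year's n-grams accounted for by one phrase."""
    return {
        year: phrase_counts.get(year, 0) / total
        for year, total in sorted(total_counts.items())
        if total > 0  # skip years with no text at all
    }


# Invented toy numbers, for illustration only (not real corpus counts).
mujer_counts = {1800: 2, 1850: 30, 1900: 90}
total_words = {1800: 1_000, 1850: 10_000, 1900: 30_000}

shares = relative_frequency(mujer_counts, total_words)
for year, share in shares.items():
    print(year, f"{share:.4%}")
```

In the toy data the raw count triples between 1850 and 1900 while the share stays flat, which is why the size of the corpus in any given period matters so much for reading the charts.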

At any rate, why don’t we go ahead and look at some n-gram examples just to see what the charts have to suggest (a word I choose on purpose).

We’ll start off with a couple of English phrases that suggest something to me about the nature of historiography in the past hundred years:

I would suggest that with this image we see a significant rise in the postwar conversation on the nature of historical objectivity and on the role of historical structures. Of course, that alone doesn’t tell us what historians may have believed about either of these central historiographical themes. That requires much more research. You will note, however, the explosion of ‘historical agency’ relative to the other two terms beginning in the 1980s, with the rise of the new cultural history. What do we make of its precipitous slide in the last few years?

As a historian of gender and the law, many of my examples today will point in those directions. And as a second English language teaser, I’ll plot patriarchy, homophobia, and heterosexism:

and also paternal and patriarchy:

You’ll note the similarity in the shape of the curves of patriarchy, homophobia, and heterosexism to that of historical agency. While this doesn’t tell us much that is concrete, it is compelling and would be an interesting jumping-off point for work on the changing nature of historical production. But, on to some Spanish-language historical plots.

mujer and muger:

We can, of course, follow orthographic shifts, as in this plot, which marks the movement from muger to mujer in the mid-19th century. This was also a period of standardization of names and other words, as orthographic regularity became important for new nation states.

machismo:

A month or two ago on H-Net, John Womack asked about the early appearances of the term machismo. Through a Google Books search, I was able to nail down one of the earliest references to the word. According to the Google Books corpus, ‘machismo’ began to appear in the 1890s in Spanish medical texts, directly referencing the new world: “Influjo del descubrimiento del nuevo mundo en las ciencias médicas: conferencia”.

Here are the search results for “machismo” in Spanish-language books between 1500 and 1940. You’ll note that the graph above does little to clarify this, except to demonstrate the escalating frequency of the term in the twentieth century, and especially after 1960. But the notion of machismo, and the adjective/noun macho, also demonstrate how hard it is for decontextualized language to show much of anything on its own. During the colonial period, of course, a macho was a male animal.

macho:

bajo la ley:

Bajo la ley, or under the law, is a phrase associated with liberal conceptions of the rule of law and constitutionality. As this chart demonstrates, the concept took off at independence. But what of that earlier bump? Following the available link for the works that produced the bump, we find a series of some thirty-one works that are largely religious and philosophical in nature. What sort of searches could one construct to look for phrases that capture the relationship to the law under the empire? Certainly bajo la ley reads in this chart as an exemplar of the semantic shift of republicanism.

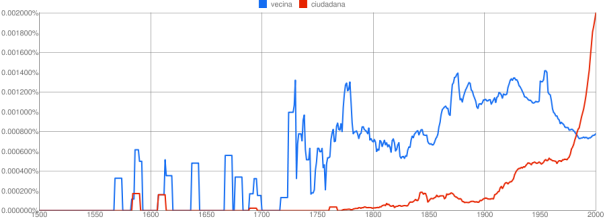

vecina and ciudadana:

vecino and ciudadano:

The above pair of graphs is suggestive of the changing relationship to citizenship that men and women had. Prior to independence, vecino/vecina carried a sense of legitimacy before the law as a bearer of citizenship rights. Note the explosion of ciudadano, the new male term for citizenship, in the immediate revolutionary period. It’s absent for women, even though women did make claims to the title ciudadana in legal cases in the 1820s. It’s only in the quest for political equality in the second half of the 20th century, as notions of human rights and citizenship rights were claimed anew by women, that ciudadana became more frequent.

constitución with various spellings:

I mentioned it in passing, but orthographic changes, including the use of diacritics or capitalization, make a difference in searching the n-gram datasets. Here we have constitución, constitucion, and Constitución.
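For anyone working with the downloaded datasets rather than the Viewer, one way to deal with this is to fold spelling variants onto a single key before counting. The sketch below is just one possible approach using Python’s standard library; the function name `fold` is my own, and note the assumption that stripping all diacritics is acceptable, which also turns ñ into n.

```python
import unicodedata
from collections import defaultdict


def fold(term):
    """Lowercase a term and strip its diacritics.

    Caveat: this removes every combining mark, so ñ becomes n as well.
    """
    decomposed = unicodedata.normalize("NFD", term)
    stripped = "".join(
        ch for ch in decomposed if not unicodedata.combining(ch)
    )
    return stripped.casefold()


variants = ["constitución", "constitucion", "Constitución"]

# Group the variants that collapse to the same folded form.
groups = defaultdict(list)
for variant in variants:
    groups[fold(variant)].append(variant)

print(dict(groups))
```

Folding of this kind only catches accents and capitals. A genuine spelling change such as muger to mujer still has to be searched as two separate terms.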

Siete Partidas:

This graph of Siete Partidas demonstrates that the temporal weight of the corpus makes a big difference to the appearances of a term. For some reason, there are no instances of Siete Partidas in the corpus before roughly 1750. That said, it is interesting to see increased frequency of the title in the period when new Spanish-speaking nations were codifying their law, and apparently taking an interest in 13th-century codes as well.

negro:

negro, esclavo, esclavitud:

muger legitima and mujer legitima:

maricon, sodomita, and crimen nefando:

A couple of years ago, I wrote a post on the etymology of the term maricón, following marica, maricon, and sodomita through the historical dictionaries of the Real Academia Española. In very few ways does that post really meet up with the above graph of maricon, sodomita, and crimen nefando, in part because most of the corpus doesn’t appear to go back earlier than 1750.

concubinato:

concubinato and adultero:

By comparison, these are the occurrences of concubinato prosecution in Quito at the end of the colonial period, as drawn out of the Criminales guide to the ANE:

What do we make of all of these graphs? Are they simply confirmatory of suspicion, of things we already knew? Maybe, or at least they are for me, and I chose the terms to demonstrate with a good idea of where they would go. That said, I think that the combination of the n-gram viewer, and of the massive collection of texts we can click through to in order to follow the contextualization of trends that the viewer identifies, offers a significant exploratory tool for historical investigation and teaching. One can imagine a range of uses for such a tool both in the classroom and outside the archive, as it were. In addition, I’ve tracked down some surprising connections through Google Books, including news of the happenings in Quito in August 1809 and 1810, published within a few months in newspapers in Baltimore and in Edinburgh, Scotland.

Alas, the shortcomings that most dog the usefulness of the n-gram viewer and Google Books more generally are in the metadata that Google uses to situate the datasets.

Nice, Chad. Since you split and OCR some of your own PDFs in your next post, you might be interested in creating your own n-gram analyses, too. Fortunately, you can do this with one line of Python. See my old post on Google as Corpus if you don’t already know how to do this. Best, Bill

That’s a nifty little line of code. I’ve mostly been using nltk for that sort of thing, but it’s often overkill.
